Classification of signals

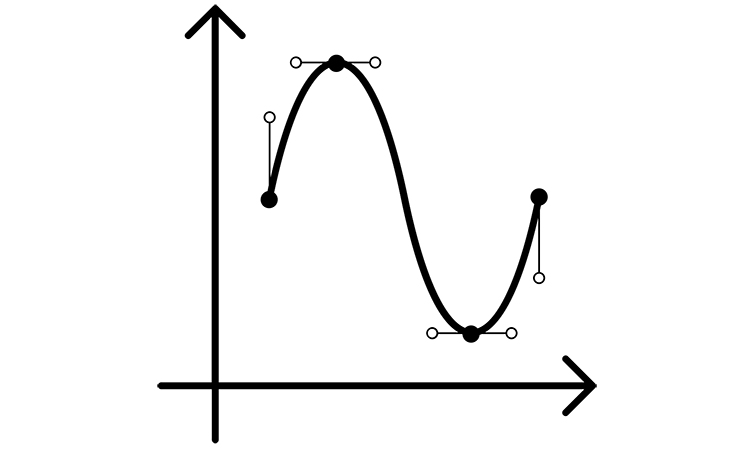

Classification of signals: Even and odd signals

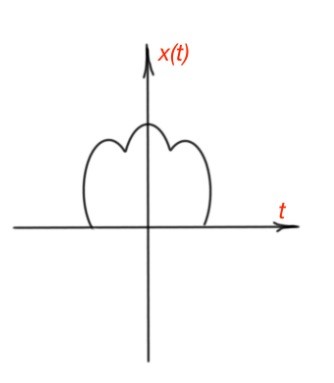

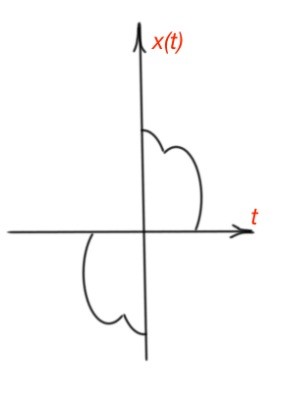

This post covers the classification of signals. Continuous-time and discrete-time electromagnetic signals are called even if they are identical to their time-reversed counterparts, i.e. $x(-t) = x(t)$ and $x[-n] = x[n]$. They are called odd if they are the negatives of their time-reversed counterparts, i.e. $x(-t) = -x(t)$ and $x[-n] = -x[n]$. An odd signal is necessarily zero at the origin: $x(0) = 0$ and $x[0] = 0$. The odd and even signals are depicted below for continuous-time signals.

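Any signal can be broken into the sum of an even part and an odd part: $\mathcal{Ev}\{x(t)\} = \frac{1}{2}[x(t) + x(-t)]$ and $\mathcal{Od}\{x(t)\} = \frac{1}{2}[x(t) - x(-t)]$.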
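The even/odd split can be tried numerically. The sketch below is only an illustration: the helper name `even_odd_parts` is made up for this example, and it assumes the signal is stored as a list sampled on $n = -N, \ldots, N$, so that reversing the list gives $x[-n]$.

```python
def even_odd_parts(x):
    """Split a sequence sampled on n = -N..N into its even and odd parts.

    Assumes an odd-length list whose middle element is the sample at n = 0.
    """
    if len(x) % 2 == 0:
        raise ValueError("expected an odd-length sequence centred on n = 0")
    reversed_x = x[::-1]  # samples of x[-n]
    even = [(a + b) / 2 for a, b in zip(x, reversed_x)]
    odd = [(a - b) / 2 for a, b in zip(x, reversed_x)]
    return even, odd


# Unit step sampled on n = -2..2
x = [0, 0, 1, 1, 1]
even, odd = even_odd_parts(x)
print(even)  # even part
print(odd)   # odd part, zero at the centre sample
```

Adding the two parts sample by sample gives back the original sequence, and the odd part is zero at $n = 0$.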

Classification of signals: Exponential and sinusoidal electromagnetic signals

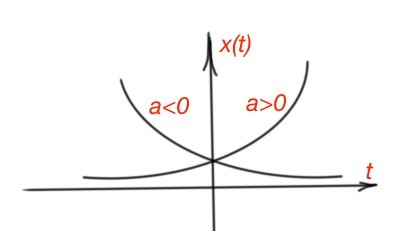

The continuous-time exponential signals are $x(t) = Ce^{at}$, where $C$ and $a$ are, in general, complex numbers. If $C$ and $a$ are real, then the function is real and there are two behaviours: if $a$ is positive, $x(t)$ is an increasing exponential function; if $a$ is negative, $x(t)$ is a decreasing exponential function (Figure 3).

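If $a$ is purely imaginary, then the function is $x(t) = e^{j\omega_0 t}$. This function is periodic, which means that $e^{j\omega_0 t} = e^{j\omega_0 (t+T)}$ for some $T > 0$. Since $e^{j\omega_0 (t+T)} = e^{j\omega_0 t}e^{j\omega_0 T}$, it follows that $e^{j\omega_0 T} = 1$. That happens if $\omega_0 = 0$ (then $x(t) = 1$ for any $T$) or, for $\omega_0 \neq 0$, if $T$ is a multiple of the fundamental period $T_0 = \frac{2\pi}{|\omega_0|}$.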

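The signals $e^{j\omega_0 t}$ and $e^{-j\omega_0 t}$ have the same fundamental period. The exponential function can also be written in the form $e^{j\omega_0 t} = \cos\omega_0 t + j\sin\omega_0 t$. If an exponential function $e^{j\omega t}$ is periodic with period $T_0$, then $e^{j\omega T_0} = 1$, so $\omega T_0 = 2\pi k$, where $k$ is an integer, and $\omega = k\omega_0$ with $\omega_0 = \frac{2\pi}{T_0}$. This leads to the harmonically related functions $\phi_k(t) = e^{jk\omega_0 t}$, where $k$ is an integer; for $k \neq 0$ each has fundamental frequency $|k|\omega_0$.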
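A quick numerical check of this periodicity is sketched below. The values of `omega0` and `t` are arbitrary choices for illustration, and `complex_exponential` is a hypothetical helper name. It checks that $e^{j\omega_0 t}$ repeats after $T_0 = 2\pi/|\omega_0|$ and that every harmonic $e^{jk\omega_0 t}$ repeats after the same $T_0$.

```python
import cmath
import math


def complex_exponential(omega, t):
    """Evaluate e^{j*omega*t}."""
    return cmath.exp(1j * omega * t)


omega0 = 3.0                       # arbitrary angular frequency, rad/s
T0 = 2 * math.pi / abs(omega0)     # fundamental period
t = 0.37                           # arbitrary time instant

# Shifting by one fundamental period should not change the value.
period_error = abs(complex_exponential(omega0, t + T0)
                   - complex_exponential(omega0, t))

# Each harmonic k*omega0 is also periodic with period T0.
harmonic_errors = [
    abs(complex_exponential(k * omega0, t + T0)
        - complex_exponential(k * omega0, t))
    for k in range(-3, 4)
]
print(period_error, max(harmonic_errors))
```

Both errors come out at the level of floating-point rounding, not exactly zero.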

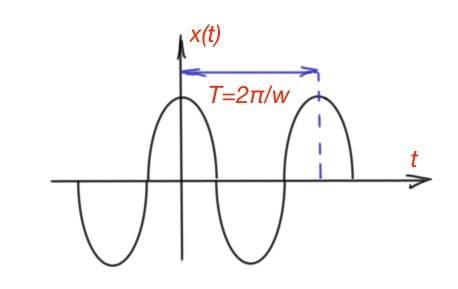

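The other type of electromagnetic signal is a sinusoidal signal $x(t) = A\cos(\omega_0 t + \phi)$. The angular frequency here is $\omega_0 = 2\pi f_0$, where $f_0$ is the frequency, and $\phi$ is the phase shift. Sinusoidal signals are also periodic functions, with fundamental period $T_0 = \frac{2\pi}{\omega_0}$. A sinusoidal signal can be written in terms of complex exponentials: $A\cos(\omega_0 t + \phi) = \frac{A}{2}e^{j\phi}e^{j\omega_0 t} + \frac{A}{2}e^{-j\phi}e^{-j\omega_0 t}$. It also follows from the above that $A\cos(\omega_0 t + \phi) = A\,\mathrm{Re}\{e^{j(\omega_0 t + \phi)}\}$ (Figure 4).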

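The total energy of a periodic complex exponential over one period is $E_{\text{period}} = \int_0^{T_0} |e^{j\omega_0 t}|^2\,dt = \int_0^{T_0} 1\,dt = T_0$. As there is an infinite number of periods, the total energy of an exponential or sinusoidal function over all time is infinite. The average power over one period is $P_{\text{period}} = \frac{1}{T_0}E_{\text{period}} = 1$. The average power of the complex exponential over all time is still equal to 1, no matter how many periods there are: $P_\infty = \lim_{T\to\infty}\frac{1}{2T}\int_{-T}^{T}|e^{j\omega_0 t}|^2\,dt = 1$. For the real sinusoid $A\cos(\omega_0 t + \phi)$ the same calculation gives an average power of $A^2/2$.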
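The energy and power of the complex exponential $e^{j\omega_0 t}$ can be approximated with a simple numerical integral. This is a rough sketch, not a definitive method: the midpoint rule, the step count and the 5 Hz frequency are all assumptions chosen for illustration.

```python
import cmath
import math


def energy_over_interval(x, t_start, t_end, steps=10_000):
    """Approximate the integral of |x(t)|^2 with the midpoint rule."""
    dt = (t_end - t_start) / steps
    return sum(abs(x(t_start + (i + 0.5) * dt)) ** 2 for i in range(steps)) * dt


omega0 = 2 * math.pi * 5           # assumed 5 Hz signal
T0 = 2 * math.pi / omega0          # fundamental period


def complex_exp(t):
    return cmath.exp(1j * omega0 * t)


energy_one_period = energy_over_interval(complex_exp, 0.0, T0)
energy_ten_periods = energy_over_interval(complex_exp, 0.0, 10 * T0)

power_one_period = energy_one_period / T0
power_ten_periods = energy_ten_periods / (10 * T0)
print(energy_one_period, energy_ten_periods)   # energy grows with the interval
print(power_one_period, power_ten_periods)     # average power stays the same
```

The energy grows in proportion to the number of periods, while the average power stays at the same value.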

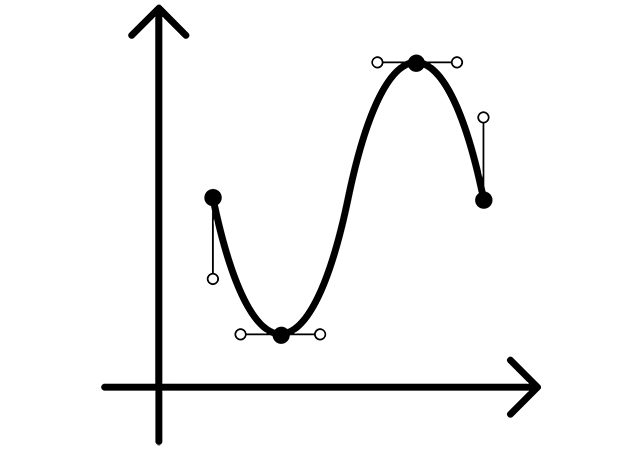

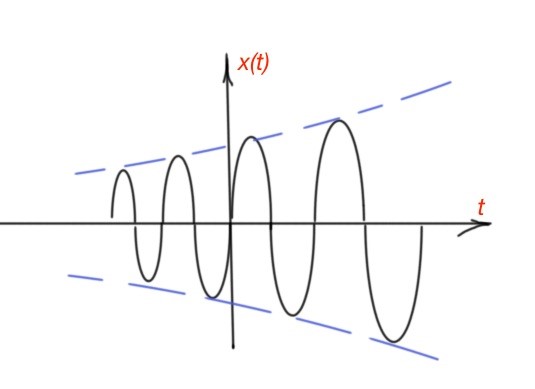

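Let's consider the most general complex exponential function $x(t) = Ce^{at}$, where both $C$ and $a$ are complex numbers. Write $C = |C|e^{j\theta}$ and $a = r + j\omega_0$, so that $Ce^{at} = |C|e^{rt}e^{j(\omega_0 t + \theta)} = |C|e^{rt}\cos(\omega_0 t + \theta) + j|C|e^{rt}\sin(\omega_0 t + \theta)$. There are three cases. For $r = 0$ the real and imaginary parts are pure sinusoids. For $r > 0$ the sinusoid is inscribed in an increasing exponential envelope (Figure 5). For $r < 0$ the sinusoid is inscribed in a decreasing exponential envelope.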
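The decomposition of a general complex exponential into an exponential envelope times a sinusoid can be checked numerically. In the sketch below, $C = |C|e^{j\theta}$ and $a = r + j\omega_0$ are taken as assumptions, the numeric values are arbitrary, and both helper names are hypothetical.

```python
import cmath
import math


def general_exponential(C, a, t):
    """Evaluate C * e^{a t} directly."""
    return C * cmath.exp(a * t)


def envelope_times_sinusoid(C, a, t):
    """Evaluate |C| e^{r t} [cos(w0 t + theta) + j sin(w0 t + theta)]."""
    magnitude, theta = abs(C), cmath.phase(C)
    r, omega0 = a.real, a.imag
    envelope = magnitude * math.exp(r * t)
    return complex(envelope * math.cos(omega0 * t + theta),
                   envelope * math.sin(omega0 * t + theta))


C = 2 * cmath.exp(1j * 0.5)   # |C| = 2, theta = 0.5 rad
a = complex(-0.3, 4.0)        # r = -0.3 (decaying), omega0 = 4 rad/s
t = 1.2

direct = general_exponential(C, a, t)
split = envelope_times_sinusoid(C, a, t)
print(direct, split)          # the two forms agree
print(abs(direct))            # magnitude follows the envelope |C| e^{r t}
```

With $r < 0$, as here, the magnitude shrinks over time; flipping the sign of `a.real` gives the growing case.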

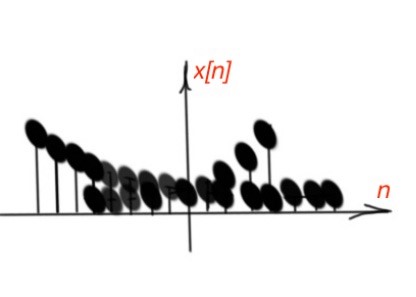

Discrete-time signals can also be complex exponential and sinusoidal. Discrete-time complex exponential signals are $x[n] = Ce^{\alpha n}$, where $C$ and $\alpha$ are, in general, complex numbers. When $C$ and $\alpha$ are real, the function decreases or increases exponentially, depending on the sign of $\alpha$.

The sign of the function values depends on the sign of $C$ (Figure 6). If $\alpha = j\omega_0$ is purely imaginary (and $C = 1$), then $x[n] = e^{j\omega_0 n}$. This function can be written as $e^{j\omega_0 n} = \cos\omega_0 n + j\sin\omega_0 n$.

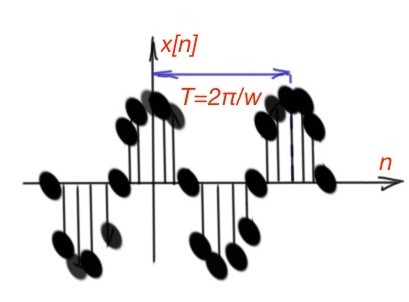

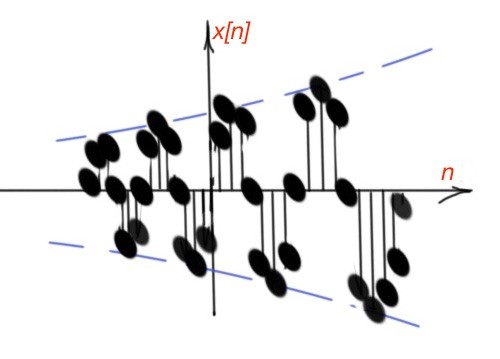

As with continuous-time functions, discrete-time periodic functions are characterised by infinite total energy and finite average power. The discrete-time sinusoid can be written as $x[n] = A\cos(\omega_0 n + \phi)$, where $\omega_0$ is the frequency in radians per sample and $\phi$ is the phase. Figure 7 depicts different cases of this function's behaviour.

The discrete-time complex exponential is periodic in frequency, with period $2\pi$, so $e^{j(\omega_0 + 2\pi)n} = e^{j2\pi n}e^{j\omega_0 n} = e^{j\omega_0 n}$. Here lies a big difference between discrete-time and continuous-time functions. The continuous-time function $e^{j\omega_0 t}$ is a distinct signal for each distinct value of the frequency $\omega_0$. The discrete-time function $e^{j\omega_0 n}$ is the same signal for the frequencies $\omega_0$, $\omega_0 \pm 2\pi$, $\omega_0 \pm 4\pi$ and so on.
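The $2\pi$ ambiguity in frequency is easy to see numerically. The sketch below uses an arbitrary `omega0` and a hypothetical helper name, and compares the samples of $e^{j\omega_0 n}$ with those of $e^{j(\omega_0 + 2\pi)n}$. For contrast, it evaluates the continuous-time versions at the non-integer time $t = 0.5$.

```python
import cmath
import math


def dt_exponential(omega, n):
    """Sample e^{j*omega*n} at integer index n."""
    return cmath.exp(1j * omega * n)


omega0 = 0.3  # arbitrary frequency, radians per sample

samples = [dt_exponential(omega0, n) for n in range(8)]
shifted = [dt_exponential(omega0 + 2 * math.pi, n) for n in range(8)]

# Discrete time: the two frequencies give the same sequence.
max_difference = max(abs(a - b) for a, b in zip(samples, shifted))

# Continuous time: at t = 0.5 the two frequencies give different values.
t = 0.5
ct_difference = abs(cmath.exp(1j * omega0 * t)
                    - cmath.exp(1j * (omega0 + 2 * math.pi) * t))
print(max_difference, ct_difference)
```

The discrete-time difference is at rounding level, while the continuous-time values differ clearly.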

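For the discrete-time complex exponential to be periodic in time with period $N$, the following should be true: $e^{j\omega_0 (n+N)} = e^{j\omega_0 n}$, i.e. $e^{j\omega_0 N} = 1$, so $\omega_0 N = 2\pi m$, where $m$ is an integer. So the fundamental period of the discrete-time function is $N = m\frac{2\pi}{\omega_0}$, with $m$ and $N$ having no common factors. This condition has no counterpart for continuous-time functions: $e^{j\omega_0 n}$ is periodic only if $\frac{\omega_0}{2\pi} = \frac{m}{N}$ is a rational number, whereas $e^{j\omega_0 t}$ is periodic for any $\omega_0$. Both functions have an undefined fundamental period for the frequency $\omega_0 = 0$. For discrete-time functions we can also define harmonically related functions as $\phi_k[n] = e^{jk(2\pi/N)n}$, for different $k = 0, \pm 1, \pm 2, \ldots$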
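The fundamental period of a discrete-time exponential can be computed exactly when the ratio $\omega_0 / 2\pi$ is given as a rational number $m/N$. The sketch below is one way to do it, under that assumption: `fundamental_period` is a hypothetical helper, and it relies on `fractions.Fraction` reducing $m/N$ to lowest terms. An irrational ratio, e.g. $\omega_0 = 1/6$, has no period and cannot be passed in this form.

```python
import cmath
import math
from fractions import Fraction


def fundamental_period(ratio):
    """Return the fundamental period N for omega0 = 2*pi*ratio.

    `ratio` must be an exact rational (omega0 / 2*pi = m / N).
    Returns None for ratio 0, where the fundamental period is undefined.
    """
    ratio = Fraction(ratio)      # reduces m/N to lowest terms
    if ratio == 0:
        return None
    return ratio.denominator     # N once common factors are removed


ratio = Fraction(4, 31)          # omega0 = 8*pi/31
N = fundamental_period(ratio)
omega0 = 2 * math.pi * float(ratio)

# Check numerically that the sequence repeats after N samples.
repeat_error = max(
    abs(cmath.exp(1j * omega0 * (n + N)) - cmath.exp(1j * omega0 * n))
    for n in range(10)
)
print(N, repeat_error)
```

Note that $\omega_0 = 8\pi/31$ needs $m = 4$ full turns before the samples repeat, which is why $N$ is not simply $2\pi/\omega_0$.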

Classification of signals: Unit-step and unit-impulse functions

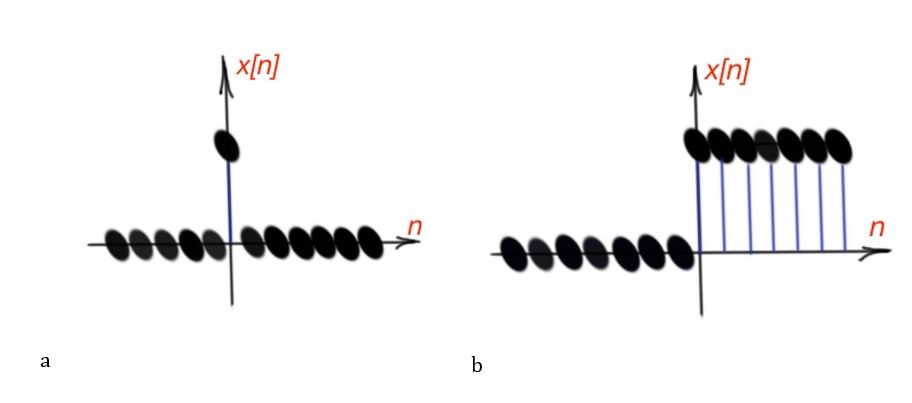

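Another important type of signal is the unit-step and unit-impulse signals. These signals can be continuous-time or discrete-time functions. The discrete-time unit impulse function is

$$\delta[n] = \begin{cases} 0, & n \neq 0 \\ 1, & n = 0 \end{cases}$$

The discrete-time unit step function is

$$u[n] = \begin{cases} 0, & n < 0 \\ 1, & n \geq 0 \end{cases}$$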

These functions are shown in Figure 9. There is a direct relationship between the discrete-time unit step and unit impulse functions: the discrete-time unit impulse is the first difference of the discrete-time unit step, $\delta[n] = u[n] - u[n-1]$. The unit step function is the running sum of the unit impulse function, $u[n] = \sum_{m=-\infty}^{n}\delta[m]$.
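Both relationships between the discrete-time step and impulse can be verified on a finite range of indices. This is a minimal sketch: the function names and the range $n = -5, \ldots, 5$ are illustrative choices. The infinite lower limit of the running sum is replaced by $-50$, which changes nothing because the impulse is zero there.

```python
def unit_impulse(n):
    """Discrete-time unit impulse: 1 at n = 0, otherwise 0."""
    return 1 if n == 0 else 0


def unit_step(n):
    """Discrete-time unit step: 1 for n >= 0, otherwise 0."""
    return 1 if n >= 0 else 0


ns = list(range(-5, 6))

# First difference of the step gives the impulse.
first_difference = [unit_step(n) - unit_step(n - 1) for n in ns]

# Running sum of the impulse gives the step (lower limit truncated at -50).
running_sum = [sum(unit_impulse(m) for m in range(-50, n + 1)) for n in ns]

print(first_difference)
print(running_sum)
```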

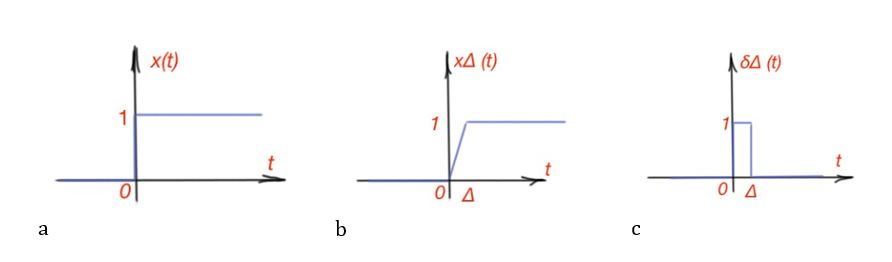

The unit impulse and unit step functions are also related as follows: $u[n] = \sum_{k=0}^{\infty}\delta[n-k]$. Continuous-time functions can also be unit impulse and unit step functions. They are represented similarly:

$$u(t) = \begin{cases} 0, & t < 0 \\ 1, & t > 0 \end{cases}$$

The continuous-time unit step function is discontinuous at $t = 0$.

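They are related to each other: $u(t) = \int_{-\infty}^{t}\delta(\tau)\,d\tau$, and in reverse $\delta(t) = \frac{du(t)}{dt}$. But there is an important note: since $u(t)$ is discontinuous at $t = 0$, it is not formally differentiable there, so the derivative must be understood as a limit. We take a continuous approximation $u_\Delta(t)$ that rises from 0 to 1 over a short interval of length $\Delta$, with derivative $\delta_\Delta(t) = \frac{du_\Delta(t)}{dt}$, and let $\Delta \to 0$. So the impulse can be represented in the following way: $\delta(t) = \lim_{\Delta\to 0}\delta_\Delta(t)$.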

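Graphically, the unit-step and unit-impulse functions are presented in Figure 10. The continuous-time unit impulse $\delta(t)$ has unit area concentrated at $t = 0$: $\int_{-\infty}^{\infty}\delta(t)\,dt = 1$. The unit impulse can also be scaled: $k\delta(t)$ has area $k$, so $\int_{-\infty}^{t}k\delta(\tau)\,d\tau = ku(t)$. Another interpretation of the unit-step function uses a time shift: if we substitute $\sigma = t - \tau$ in $u(t) = \int_{-\infty}^{t}\delta(\tau)\,d\tau$, then $u(t) = \int_{0}^{\infty}\delta(t-\sigma)\,d\sigma$. Similarly, for the discrete-time function, $u[n] = \sum_{k=0}^{\infty}\delta[n-k]$.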

Continuous-time and discrete-time electromagnetic systems

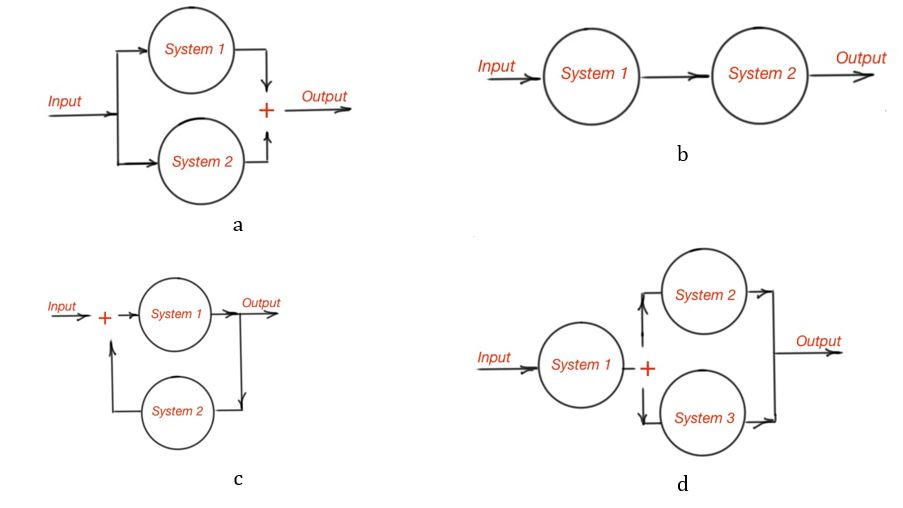

A system is a process in which the input signal is transformed in some specific way, resulting in some specific output signal. A continuous-time system is a system to which a continuous-time input signal is applied and which produces a continuous-time output signal. A discrete-time system is a system that transforms a discrete-time input signal into a discrete-time output signal. Very often real systems are complex and are built as interconnections of simple subsystems. The types of interconnection are the series (or cascade) interconnection, the parallel interconnection, the series-parallel interconnection and the feedback interconnection. All these interconnections are depicted graphically in Figure 11.
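Series and parallel interconnections can be sketched by treating each subsystem as a function from an input sequence to an output sequence. This modelling choice and all the names below (`series`, `parallel`, `double`, `square`) are assumptions made for illustration only. The parallel combiner here adds the two outputs.

```python
def series(system1, system2):
    """Cascade: the output of system1 feeds system2."""
    return lambda x: system2(system1(x))


def parallel(system1, system2):
    """Parallel: both systems see the same input, outputs are added."""
    return lambda x: [a + b for a, b in zip(system1(x), system2(x))]


def double(x):
    """Example subsystem: y[n] = 2 x[n]."""
    return [2 * v for v in x]


def square(x):
    """Example subsystem: y[n] = x[n]^2."""
    return [v * v for v in x]


x = [1, 2, 3]
cascade_output = series(double, square)(x)     # (2 x[n])^2
parallel_output = parallel(double, square)(x)  # 2 x[n] + x[n]^2
print(cascade_output)   # [4, 16, 36]
print(parallel_output)  # [3, 8, 15]
```

A series-parallel interconnection is then just a composition of these two helpers, for example `series(parallel(double, square), double)`.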

System properties

A system is called memoryless when its output, for each value of the independent variable, depends only on the input at that same moment of time. For example, a memoryless continuous-time system is $y(t) = Rx(t)$, and a memoryless discrete-time system is $y[n] = (2x[n] - x^2[n])^2$.

The simplest memoryless system is the identity system, whose output is identical to its input: $y(t) = x(t)$, or $y[n] = x[n]$. An example of a discrete-time system with memory is the summer $y[n] = \sum_{k=-\infty}^{n}x[k]$, also called an accumulator. Another example is a delay, $y[n] = x[n-1]$. Memory means that the system stores information about the input at moments different from the current moment. Here is the memory concept for the summer: $y[n] = \sum_{k=-\infty}^{n-1}x[k] + x[n] = y[n-1] + x[n]$.
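The accumulator's memory can be made concrete with a short sketch of the recursion $y[n] = y[n-1] + x[n]$, where the only thing remembered is the previous output. The code assumes the input is zero before the first listed sample. The function names are illustrative.

```python
def accumulator(x):
    """Running sum y[n] = y[n-1] + x[n], assuming x[n] = 0 before the list starts."""
    y = []
    previous = 0                      # stored value of y[n-1]: the system's memory
    for sample in x:
        previous = previous + sample  # y[n] = y[n-1] + x[n]
        y.append(previous)
    return y


def unit_delay(x):
    """Delay y[n] = x[n-1], with a zero assumed before the first sample."""
    return [0] + x[:-1]


x = [1, 2, 3, 4]
print(accumulator(x))  # [1, 3, 6, 10]
print(unit_delay(x))   # [0, 1, 2, 3]
```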

A system is invertible if distinct inputs lead to distinct outputs. An invertible system is characterised by its inverse system: the system that, when cascaded with the original system, produces an output equal to the original input. For example, $y(t) = 2x(t)$ is an invertible system, with inverse $w(t) = \frac{1}{2}y(t)$. The system $y(t) = x^2(t)$ is not invertible, as from the output we cannot determine the sign of the original input.
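Invertibility can be illustrated with two simple memoryless systems, chosen here as assumptions for the example: a gain of 2, which has an inverse, and a squarer, which does not because two different inputs give the same output.

```python
def double(x):
    """y[n] = 2 x[n]: invertible."""
    return [2 * v for v in x]


def halve(y):
    """Inverse of `double`: w[n] = y[n] / 2."""
    return [v / 2 for v in y]


def square(x):
    """y[n] = x[n]^2: not invertible, the sign of the input is lost."""
    return [v * v for v in x]


x = [1.0, -2.0, 3.5]
recovered = halve(double(x))          # cascade of system and its inverse
print(recovered == x)                 # True: the input is recovered

# Two distinct inputs that the squarer cannot tell apart.
print(square([1, -2]) == square([-1, 2]))  # True
```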

A causal system is a system whose output at the current time depends only on the values of the input at the current time and at previous times.

A system is called stable when small inputs do not lead to diverging outputs; that is, a bounded input produces a bounded output.

A system is time invariant if the characteristics or behaviour of the system are fixed in time. A time shift of the input then produces an identical time shift of the output: if $x[n]$ produces $y[n]$, then $x[n - n_0]$ produces $y[n - n_0]$.
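A time-invariance check can be sketched by comparing "shift, then apply the system" with "apply the system, then shift". The two example systems, $y[n] = x^2[n]$ and $y[n] = n\,x[n]$, and all helper names are assumptions for illustration. A single passing test does not prove time invariance, but a failing one disproves it.

```python
def shift(x, k):
    """Delay a finite sequence by k >= 0 samples, padding with zeros.

    Samples pushed past the end of the list are dropped, so the input
    should end with at least k zeros.
    """
    return [0] * k + x[:len(x) - k]


def squarer(x):
    """y[n] = x[n]^2 (time invariant)."""
    return [v * v for v in x]


def ramp_gain(x):
    """y[n] = n * x[n], with n the list index (time varying)."""
    return [n * v for n, v in enumerate(x)]


def shift_commutes(system, x, k):
    """True if shifting the input by k shifts the output by k for this x."""
    return system(shift(x, k)) == shift(system(x), k)


x = [1, 2, 3, 0, 0]
print(shift_commutes(squarer, x, 2))    # True
print(shift_commutes(ramp_gain, x, 2))  # False
```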

A system is linear if, when the input is a sum of several inputs, the output is the sum of the corresponding outputs, where every output is the response to one particular input. Two features of a linear system are shown below. Let's suppose that $y_1(t)$ is the output for the input $x_1(t)$ and $y_2(t)$ is the output corresponding to the input $x_2(t)$. Then the outputs are:

- For $x_1(t) + x_2(t)$ the output is $y_1(t) + y_2(t)$ (additivity property);

- For $ax_1(t)$ the output is $ay_1(t)$, where $a$ is any complex number (scaling property).

Here we can formulate the superposition property. Let $x_k[n]$, for $k = 1, 2, 3, \ldots$, be a set of inputs to a discrete-time linear system, with corresponding outputs $y_k[n]$. If the input is the linear combination $x[n] = \sum_k a_k x_k[n]$, where each $a_k$ is any complex number, then the output is the same combination of the outputs, $y[n] = \sum_k a_k y_k[n]$. A particular consequence of this property is that if the input of a linear system is 0, then the output of the system will be 0 too.
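Superposition can be tested numerically by feeding a system a linear combination of two inputs and comparing the result with the same combination of the individual outputs. The sketch below is illustrative only: the accumulator and squarer are example systems chosen here, the helper names are hypothetical, and the complex coefficients are arbitrary.

```python
from itertools import accumulate


def accumulator(x):
    """Running sum y[n] = sum of x[k] for k <= n (a linear system)."""
    return list(accumulate(x))


def squarer(x):
    """y[n] = x[n]^2 (not a linear system)."""
    return [v * v for v in x]


def combine(a1, x1, a2, x2):
    """Form the linear combination a1*x1[n] + a2*x2[n]."""
    return [a1 * u + a2 * v for u, v in zip(x1, x2)]


def obeys_superposition(system, a1, x1, a2, x2, tol=1e-9):
    """Compare system(a1*x1 + a2*x2) with a1*system(x1) + a2*system(x2)."""
    response_to_sum = system(combine(a1, x1, a2, x2))
    sum_of_responses = combine(a1, system(x1), a2, system(x2))
    return all(abs(p - q) <= tol
               for p, q in zip(response_to_sum, sum_of_responses))


x1, x2 = [1, 2, 3], [0, -1, 4]
a1, a2 = 2 + 1j, -1

print(obeys_superposition(accumulator, a1, x1, a2, x2))  # True
print(obeys_superposition(squarer, a1, x1, a2, x2))      # False
print(accumulator([0, 0, 0]))                            # zero in, zero out
```

As with any numerical check, passing for one pair of inputs does not prove linearity, while a single failure is enough to rule it out.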
