Tabletop setup provides more nuanced picture of heat production in microelectronics.

Today’s computer chips pack billions of tiny transistors onto a plate of silicon no wider than a fingernail. Each transistor, just tens of nanometers wide, acts as a switch that, in concert with others, carries out a computer’s computations. As dense forests of transistors signal back and forth, they give off heat, which can fry the electronics if a chip gets too hot.

Manufacturers commonly apply a classical diffusion theory to gauge a transistor’s temperature rise in a computer chip. But now an experiment by MIT engineers suggests that this common theory doesn’t hold up at extremely small length scales. The group’s results indicate that the diffusion theory underestimates the temperature rise of nanoscale heat sources, such as a computer chip’s transistors. Such a miscalculation could affect the reliability and performance of chips and other microelectronic devices.

“We verified that when the heat source is very small, you cannot use the diffusion theory to calculate temperature rise of a device. Temperature rise is higher than diffusion prediction, and in microelectronics, you don’t want that to happen,” says Professor Gang Chen, head of the Department of Mechanical Engineering at MIT. “So this might change the way people think about how to model thermal problems in microelectronics.”

The group, which includes graduate student Lingping Zeng and Institute Professor Mildred Dresselhaus of MIT, Yongjie Hu of the University of California at Los Angeles, and Austin Minnich of Caltech, published its results this week in the journal Nature Nanotechnology.

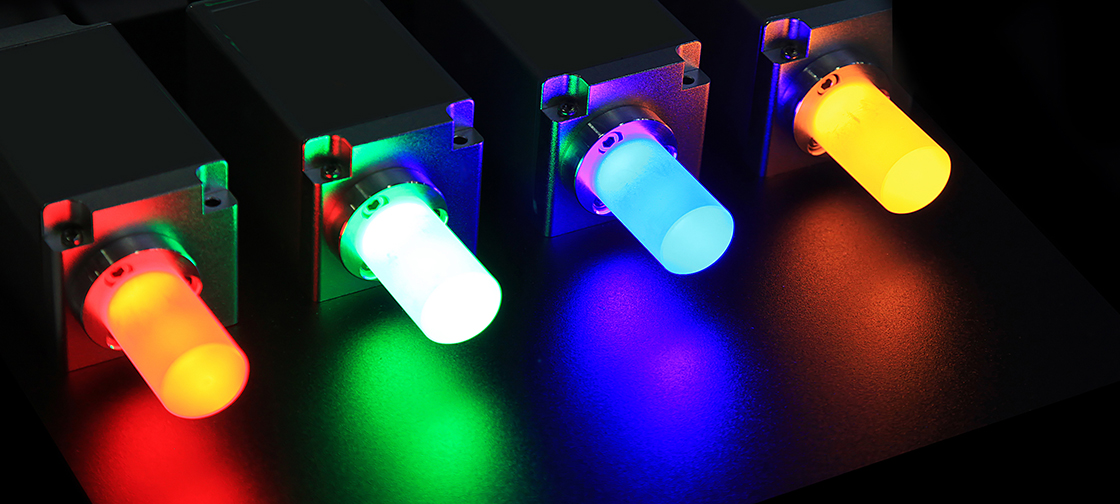

Credit: “A new tool measures the distance between phonon collisions”, Jennifer Chu, MIT News Office