This post covers the three types of cache memory, along with cache structure and functions. Cache memory is a set of fast memory locations that serves applications needing quick access. It can store both data and instructions, and both data caches and instruction caches improve processor performance.

Structurally, cache memory consists of sub-banks. Each sub-bank consists of ways, which are made up of lines. Each line is a collection of consecutive bytes.

Together, ways and lines form the locations where data and instructions are stored in the cache.
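To make the hierarchy concrete, here is a minimal sketch that computes how many lines and bytes a cache holds from its geometry. The class name `CacheGeometry` and the specific numbers are assumptions chosen for illustration, not values taken from a particular processor.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CacheGeometry:
    """Hypothetical description of a cache's layout."""
    sub_banks: int       # independent sub-banks in the cache
    ways: int            # ways per sub-bank
    lines_per_way: int   # cache lines in each way
    line_bytes: int      # consecutive bytes held by one line

    def total_lines(self) -> int:
        # Every sub-bank contains `ways` ways, each with `lines_per_way` lines.
        return self.sub_banks * self.ways * self.lines_per_way

    def capacity_bytes(self) -> int:
        # Each line stores `line_bytes` consecutive bytes.
        return self.total_lines() * self.line_bytes


# Assumed example geometry: 4 sub-banks x 4 ways x 32 lines x 32 bytes.
geometry = CacheGeometry(sub_banks=4, ways=4, lines_per_way=32, line_bytes=32)
print(geometry.total_lines())     # 512
print(geometry.capacity_bytes())  # 16384
```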

The processor uses tag arrays to find information in the cache. Every line in the cache has an entry in the tag array. The tag indicates whether the requested information is present in the cache. The processor also checks the validity bit to see whether the cache line is valid or invalid. If the line is valid, the processor can use the information found in the cache.

A cache hit means that the data or instruction the processor needs is in the cache; a cache miss means that it is not.
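The lookup described above can be sketched roughly as follows. This is a simplified model, not a description of real hardware: the 32-byte line size, the `CacheLine` class, and the helper names `block_tag` and `lookup` are all assumptions, and the tag is simply taken to be the address divided by the line size.

```python
from dataclasses import dataclass

LINE_BYTES = 32  # assumed line size in bytes


@dataclass
class CacheLine:
    valid: bool = False  # validity bit
    tag: int = 0         # identifies which memory block the line holds


def block_tag(address: int) -> int:
    # Simplification: the tag is the number of the 32-byte block.
    return address // LINE_BYTES


def lookup(lines: list, address: int) -> str:
    """Return "hit" only if some line is valid AND its tag matches."""
    tag = block_tag(address)
    for line in lines:
        if line.valid and line.tag == tag:
            return "hit"
    return "miss"


lines = [
    CacheLine(valid=True, tag=block_tag(0x1000)),
    CacheLine(valid=False, tag=block_tag(0x2000)),  # stale, invalid line
]
print(lookup(lines, 0x1004))  # hit: same block as 0x1000, and the line is valid
print(lookup(lines, 0x2000))  # miss: the tag matches but the line is invalid
print(lookup(lines, 0x3000))  # miss: no tag matches
```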

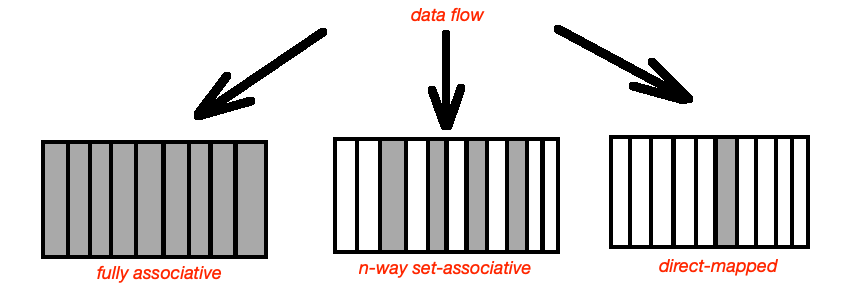

There are three types of cache:

- direct-mapped cache;

- fully associative cache;

- N-way-set-associative cache.

In a fully associative cache, any memory location can be cached in any cache line. This type significantly reduces the number of cache-line misses, but it is considered complex to implement.

In a direct-mapped cache, each memory location maps to a single cache line. That line can hold only one of the addresses that map to it at any given time, so addresses that share a line evict each other. As a result, this type of cache generally performs worse than the others.
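A small simulation shows why a direct-mapped cache can perform poorly. It is only a sketch under assumed parameters (8 lines of 32 bytes, and a hypothetical `DirectMappedCache` class): two addresses that map to the same line keep evicting each other, so every access misses.

```python
LINE_BYTES = 32  # assumed line size
NUM_LINES = 8    # assumed number of lines in the cache


class DirectMappedCache:
    def __init__(self):
        # One tag slot per line; None means the line is invalid.
        self.tags = [None] * NUM_LINES

    def access(self, address: int) -> str:
        block = address // LINE_BYTES
        index = block % NUM_LINES   # the single line this address may use
        tag = block // NUM_LINES    # distinguishes blocks sharing that line
        if self.tags[index] == tag:
            return "hit"
        self.tags[index] = tag      # evict whatever was stored there
        return "miss"


cache = DirectMappedCache()
a, b = 0x0000, 0x0100  # both map to line 0 with these parameters
results = [cache.access(x) for x in (a, b, a, b)]
print(results)  # ['miss', 'miss', 'miss', 'miss']
```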

In an N-way set-associative cache, the most common cache implementation, a memory address can be stored in any of N cache lines (the N ways of the set it maps to).
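As a rough illustration, the sketch below models a 2-way set-associative cache. The parameters (4 sets, 2 ways, 32-byte lines) and the `SetAssociativeCache` class are assumptions, and the rule used when a set is full (overwrite the first way) is a deliberately crude placeholder rather than a realistic replacement policy.

```python
LINE_BYTES = 32  # assumed line size
NUM_SETS = 4     # assumed number of sets
NUM_WAYS = 2     # N = 2 lines available per set


class SetAssociativeCache:
    def __init__(self):
        # Each set is a list holding up to NUM_WAYS tags.
        self.sets = [[] for _ in range(NUM_SETS)]

    def access(self, address: int) -> str:
        block = address // LINE_BYTES
        index = block % NUM_SETS   # which set the address maps to
        tag = block // NUM_SETS
        ways = self.sets[index]
        if tag in ways:            # the block may sit in any way of the set
            return "hit"
        if len(ways) < NUM_WAYS:
            ways.append(tag)       # a free way is still available
        else:
            ways[0] = tag          # placeholder eviction, not a real policy
        return "miss"


cache = SetAssociativeCache()
a, b = 0x0000, 0x0080  # both map to set 0 with these parameters
results = [cache.access(x) for x in (a, b, a, b)]
print(results)  # ['miss', 'miss', 'hit', 'hit']
```

Because the set has two ways, both addresses can stay cached at once, whereas a single line per address would force them to evict each other.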

If, on a cache access, the processor finds that all the cache lines it could use are already valid, the least recently used (LRU) algorithm is applied: the data that has gone the longest without being accessed is replaced with the new data.
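LRU replacement can be sketched in software with an ordered dictionary. This is a minimal model of a single set, assuming a hypothetical `LRUSet` class; real hardware typically tracks recency with a few status bits per line rather than a dictionary.

```python
from collections import OrderedDict


class LRUSet:
    """One cache set with LRU replacement (illustrative model only)."""

    def __init__(self, num_ways: int):
        self.num_ways = num_ways
        # Maps tag -> data, ordered from least to most recently used.
        self.lines = OrderedDict()

    def access(self, tag, data=None) -> str:
        if tag in self.lines:
            self.lines.move_to_end(tag)      # mark as most recently used
            return "hit"
        if len(self.lines) >= self.num_ways:
            self.lines.popitem(last=False)   # evict the least recently used
        self.lines[tag] = data
        return "miss"


lru = LRUSet(num_ways=2)
history = [lru.access(t) for t in ("A", "B", "A", "C")]
print(history)          # ['miss', 'miss', 'hit', 'miss']
print(list(lru.lines))  # ['A', 'C']
```

When "C" arrives, the set is full; "B" is evicted because "A" was touched more recently.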

A wide selection of cache memory components can be found at Digi-Key Electronics.

Source: “Embedded Hardware: Know It All”, Jack Ganssle, Tammy Noergaard, Fred Eady, Creed Huddleston, Lewin Edwards, David J. Katz, Rick Gentile, Ken Arnold, Kamal Hyder, Bob Perrin.